When I started my new gig a little over a month ago, one of the first problems I wanted to tackle was the flow of work. My team does a lot, and some of the work is very structured and repetitive, whereas other work is much more fluid. It’s really two teams with different foci: they ran different sync meetings and had different work intake processes, but they were sharing a board.

The board was a typical software-development style kanban board; board columns included things like:

- Ready – User stories pulled out of backlog based on business priorities

- In Progress – work that was ongoing

- On Hold – work that was waiting for other team input

- Validate – Work that needed sign-off from someone

- Closed – recently “done” items

For a fluid SRE team where the project work can be ambiguous, a flow like this is OK (with some caveats that I’ll explain below). In Progress is a “squishy” state for work; you can’t tell at a glance what the steps are or how long they’re going to take. However, SRE work itself can be “squishy”; you don’t know if you’re going to be writing a script to automate a technical process or helping coordinate a large-scale project to implement SLOs for a new service. Having a card sitting In Progress is just a visual reminder of a task list.

For structured, repeatable work, though, this style of board has limitations; if the steps of being In Progress are known, then you can highlight obstacles in your process by teasing them out. The regulated work team doesn’t know how often projects will come in, but when they do, the team follows the same series of steps for every project (no matter how much data is involved). There was also a lot of regular back-and-forth between this team and client teams early in the process; using the old board, cards were constantly moving back and forth between In Progress and On Hold. That made WIP limits on the board very difficult to track.

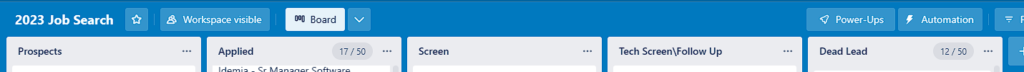

We spun up a new board to better represent the flow, focusing on replacing the concept of In Progress with more specific states tailored to the flow. We also identified the primary constraint(s) for each state, be it Client, Team Member, or Systems:

- Ready: Projects (not single stories) pulled from the backlog in terms of priority (Client)

- Requirements: A discussion phase with clients to finalize the expectations (Client/Team Member)

- Prep: Project scripts are developed; data is gathered. (Team Member)

- Produce: Actual work is in-flight (Systems)

- Analysis: Write-up from project (Team Member)

- Validate: Work that needs sign-off from someone (Client)

- Closed: Recently “done” items

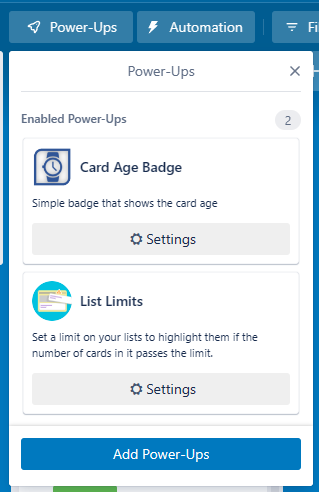

Note that WIP can now be defined for multiple states (Prep, Produce, and Analysis); each of these states is governed by a limited number of resources. Team members can only have one project in the Analysis state at a time, for example. Note also that each card represents the entire project, not just a task inside a project. It’s an assembly-line mentality, and it lets us quickly see where a constraint is acting as a bottleneck.
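To make the per-state WIP idea concrete, here is a minimal sketch in Python. It is not how our board tool works; the `Card` class, the `resource` field, and the limits for Prep and Produce are all assumptions made up for illustration. Only the limit of one project per team member in Analysis comes from the rule above.

```python
from collections import Counter
from dataclasses import dataclass

# WIP limit per constraining resource, keyed by state.
# Only the Analysis limit of 1 reflects the actual rule; the
# Prep and Produce numbers are hypothetical placeholders.
WIP_LIMITS = {"Prep": 2, "Produce": 2, "Analysis": 1}


@dataclass
class Card:
    project: str   # a card is a whole project, not a single task
    resource: str  # hypothetical: the team member or system doing the work
    state: str


def wip_violations(cards, limits=WIP_LIMITS):
    """Return sorted (resource, state, count, limit) tuples that exceed a limit."""
    counts = Counter((c.resource, c.state) for c in cards if c.state in limits)
    return sorted(
        (resource, state, n, limits[state])
        for (resource, state), n in counts.items()
        if n > limits[state]
    )


cards = [
    Card("Project A", "alice", "Analysis"),
    Card("Project B", "alice", "Analysis"),  # second Analysis card for alice
    Card("Project C", "bob", "Prep"),
]
violations = wip_violations(cards)
for resource, state, n, limit in violations:
    print(f"{resource} has {n} cards in {state} (limit {limit})")
```

Counting per resource rather than per column is a deliberate simplification here: it matches the idea that each state is limited by whoever, or whatever, does the work in it.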

Removing the regulated workflow from the original board let us focus on some rules for the fluid SRE work (the caveats referenced above). One of the basic decisions needed for a kanban flow is what a card represents. For assembly lines, a unit of work could be the whole project, but for fluid software development efforts, it gets really soft.

We decided to go with a timebox method; a unit of work should take no more than a day of focused work. A card could be really small or reasonably large, but if you think a project will take more than a day, then split the work out. This has psychological advantages; it’s far better to see things getting marked as “done” than to just watch a card sit in the In Progress column for weeks.

Another rule we implemented was transferring ownership of a card in the Validate phase back to the original requester. We then started a clock; if a card sat in the Validate phase for more than 7 days, we flipped the ownership back to the team member and closed the card.
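The Validate clock is simple enough to sketch as code. This is an illustration under assumptions, not the automation we actually run: the `Card` fields and both function names are hypothetical, and only the ownership hand-off and the 7-day timeout come from the rule described above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

VALIDATE_TIMEOUT = timedelta(days=7)  # the 7-day clock from the rule above


@dataclass
class Card:
    title: str
    requester: str    # who originally asked for the work
    team_member: str  # who did the work
    owner: str
    state: str = "In Progress"
    validate_since: Optional[datetime] = None


def move_to_validate(card: Card, now: datetime) -> None:
    """Hand the card to the requester and start the clock."""
    card.state = "Validate"
    card.owner = card.requester
    card.validate_since = now


def close_if_stale(card: Card, now: datetime) -> bool:
    """Close a card that has sat in Validate for more than 7 days.

    Returns True if the card was closed by this call.
    """
    if card.state != "Validate" or card.validate_since is None:
        return False
    if now - card.validate_since > VALIDATE_TIMEOUT:
        card.owner = card.team_member  # flip ownership back
        card.state = "Closed"
        card.validate_since = None
        return True
    return False


start = datetime(2024, 1, 1, 9, 0)
card = Card("Automate cert renewal", requester="dana", team_member="lee", owner="lee")
move_to_validate(card, start)
closed_on_day_7 = close_if_stale(card, start + timedelta(days=7))  # exactly 7 days
closed_on_day_8 = close_if_stale(card, start + timedelta(days=8))  # past the limit
```

A card at exactly 7 days stays open in this sketch, since the rule says "more than 7 days"; a scheduled job could call `close_if_stale` on every Validate card once a day.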

So far, the team seems to be working well with the two new improvements; after 30 days, we should have enough data to see where we can continue to improve the flow.