So after my last post, my developer friend came back to me and noted that I hadn’t really demonstrated the situation we had discussed; our work was a little more challenging than the sample script I had provided. In contrast to what I previously posted, the challenge was to delete nodes where a sub-node contained an attribute of interest. Let me repost the same sample code as an illustration:

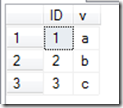

    DECLARE @X XML = '<root>
      <class teacher="Smith" grade="5">
        <student name="Ainsworth" />
        <student name="Miller" />
      </class>
      <class teacher="Jones" grade="5">
        <student name="Davis" />
        <student name="Mark" />
      </class>
      <class teacher="Smith" grade="4">
        <student name="Baker" />
        <student name="Smith" />
      </class>
    </root>';

    SELECT @X;

If I wanted to delete the class nodes which contain a student node with a name of “Miller”, there are a couple of ways to do it; the first method involves two passes:

    SET @X.modify('delete /root/class//.[@name = "Miller"]/../*');
    SET @X.modify('delete /root/class[not (node())]');

    SELECT @X;

In this case, we walk the axis and find a node test of class (/root/class); we then apply a predicate to look for an attribute of name with a value of Miller ([@name = "Miller"]) in any node at or below the class node (//.). We then walk back up one node (/..) and delete all of its child elements (/*).

That leaves us with an XML document that has three nodes for class, one of which is empty (the first one). We then have to do a second pass through the XML document to delete any class node that does not have nodes below it (/root/class[not (node())]).
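To see why the second pass is needed, it can help to count the class nodes between the two steps. The following is only a sketch against the same sample document (written compactly here); the column aliases are my own and not part of the original script.

```sql
DECLARE @X XML = '<root>
  <class teacher="Smith" grade="5"><student name="Ainsworth" /><student name="Miller" /></class>
  <class teacher="Jones" grade="5"><student name="Davis" /><student name="Mark" /></class>
  <class teacher="Smith" grade="4"><student name="Baker" /><student name="Smith" /></class>
</root>';

-- First pass: remove the children of any class that has a node named Miller
SET @X.modify('delete /root/class//.[@name = "Miller"]/../*');

-- The class node itself is still there, just empty
SELECT
    @X.value('count(/root/class)', 'int')              AS ClassNodes,      -- 3
    @X.value('count(/root/class[not(node())])', 'int') AS EmptyClassNodes; -- 1

-- Second pass: remove the class nodes that were emptied
SET @X.modify('delete /root/class[not(node())]');

SELECT @X.value('count(/root/class)', 'int') AS ClassNodes; -- 2
```

Note that the second pass would also remove any class node that was already empty before the first pass ran, which may or may not be what you want.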

The second method accomplishes the same thing in a single pass:

    SET @X.modify('delete /root/class[student/@name="Miller"]');

    SELECT @X;

In this case, we walk the axis to class (/root/class), and then apply a predicate that looks for a node of student with an attribute of name with a value of Miller ([student/@name="Miller"]). The difference in this syntax is that the context for the delete statement stays at the matching class node, as opposed to stepping down a node and then back up.
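In practice the name to match usually is not hard-coded. Because modify() requires a string literal, one way to handle this is the sql:variable() function inside the predicate. This is a minimal sketch, assuming the same sample document; @StudentName is a hypothetical variable I introduced for illustration.

```sql
DECLARE @X XML = '<root>
  <class teacher="Smith" grade="5"><student name="Ainsworth" /><student name="Miller" /></class>
  <class teacher="Jones" grade="5"><student name="Davis" /><student name="Mark" /></class>
  <class teacher="Smith" grade="4"><student name="Baker" /><student name="Smith" /></class>
</root>';

-- Hypothetical parameter holding the student name to search for
DECLARE @StudentName NVARCHAR(50) = N'Miller';

-- Single pass: delete every class that has a student with the given name
SET @X.modify('delete /root/class[student/@name = sql:variable("@StudentName")]');

-- exist() returns 0 once no class contains a matching student
SELECT @X.exist('/root/class[student/@name = sql:variable("@StudentName")]') AS StillHasMatch;

SELECT @X;
```

If no class matches, the delete simply does nothing, so there is no need to test for a match beforehand.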

SQL Server Hekaton, Microsoft’s new In-Memory table technology being shipped as part of SQL Server 2014, will completely change the way you think about data management. As a DBA, you’ll need to analyze your memory and storage needs completely differently. All Hekaton data is always stored in memory, and the data stored on disk is basically just a REDO log used to regenerate the contents of your memory-optimized tables. In this full-day seminar, Kalen Delaney (a SQL Server MVP for over 20 years) will show you the in-memory architecture for your Hekaton data and indexes, and discuss what gets written to disk during checkpoints, as well as what gets logged. She will explain how the recovery process recreates your Hekaton tables. Finally, she’ll go into detail on just what it is that makes Hekaton so much FASTER!

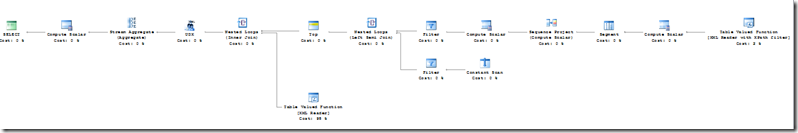

SQL Server Hekaton, Microsoft’s new In-Memory table technology being shipped as part of SQL Server 2014, will completely change the way you think about data management. As a DBA, you’ll need to analyze your memory and storage needs completely differently. All Hekaton data is always stored in memory, and the data stored on disk is basically just a REDO log used to regenerate the contents of your memory-optimized tables. In this full-day seminar, Kalen Delaney (a SQL Server MVP for over 20 years) will show you the in-memory architecture for your Hekaton data and indexes, and discuss what gets written to disk during checkpoints, as well as what gets logged. She will explain how the recovery process recreates your Hekaton tables. Finally, she’ll go into detail on just what it is that makes Hekaton so much FASTER!  In this session you will learn about SQL Server 2008 R2 and SQL Server 2012 performance tuning and optimization. Industry Expert Denny Cherry will guide you through tools and best practices for tuning queries and improving performance within Microsoft SQL Server. This session will guide you through real life performance problems which have been gathered and tuned using industry standard best practices and real world skills.

In this session you will learn about SQL Server 2008 R2 and SQL Server 2012 performance tuning and optimization. Industry Expert Denny Cherry will guide you through tools and best practices for tuning queries and improving performance within Microsoft SQL Server. This session will guide you through real life performance problems which have been gathered and tuned using industry standard best practices and real world skills.  The chances are that your organization has a centralized data repository, such as ODS or a data warehouse, but you might not use it to the fullest. Join this insightful full-day event to understand the importance of having a semantic layer that bridges users and data. In the Microsoft BI world, BISM consists of Power Pivot, Tabular, and Multidimensional.

The chances are that your organization has a centralized data repository, such as ODS or a data warehouse, but you might not use it to the fullest. Join this insightful full-day event to understand the importance of having a semantic layer that bridges users and data. In the Microsoft BI world, BISM consists of Power Pivot, Tabular, and Multidimensional.