So, as usual, I’m struggling to sit down and write; I know I should (it’s good for the soul), but frankly, I’m having trouble putting words on the web page. As a method of jumpstarting my brain, I thought I would write about something simple and relevant to what I’m doing these days in my primary capacity: management.

When I took over the reins of a newly formed department of DBAs, I knew that I needed to do something quickly to demonstrate the value of our department to our recently reorganized company. We’re a small division in our company, but we manage some relatively large databases (17 TB of data with a relatively high change rate: approximately 10 TB changes daily). My team was made up of senior DBAs who were inundated with support requests (from “I need to know this information” to “I need help cleaning up this client’s data”); while they had monitoring structures in place, it wasn’t uncommon for things to go unnoticed (unplanned database growth, poorly performing queries, etc.). One of my first acts as a manager was to put in place a system of classification I called MARS; all of our work as DBAs needed to be categorized into one of four broad groups:

Maintenance, Architecture, Research, and Support

The premise is simple: by categorizing our efforts, we could measure where the focus of our department was and begin to allocate resources to the proper arenas. I defined the four areas of work as follows:

- Maintenance – the efforts needed to keep the system performing well; backups, security, proactive query tuning, and general monitoring are examples.

- Architecture – work associated with the deployment of new features, functionality, or hardware; data sizing estimates, upgrades to SQL Server 2012, and installation of Analysis Services are examples. To be honest, Infrastructure may have been a better term, but MIRS sounded stupid.

- Research – the efforts to understand and improve employee skills; I’m a former teacher, and I put a pretty high value on lifelong learning. I want my team to be recognized as experts, and the only way that can happen is if the expectation is there for them to learn.

- Support – the 800 lb gorilla in our shop; support efforts focus on incident management (to use ITIL terms) and problem resolution. Support is usually instigated by some other group; for example, sales may request a contact list from our CRM that they can’t get through the interface, or we may get asked to explain why a ticket didn’t get generated for a customer.

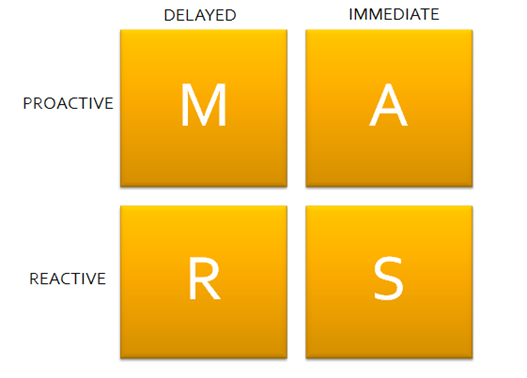

After about a year of data gathering, I went back and thought about the categories a bit more, and realized that I could associate some descriptive adjectives with the workload to demonstrate where the heart of our efforts lies. I took my cues from the Johari window, and came up with two axes: Actions (Proactive–Reactive) and Results (Delayed–Immediate). I then arranged my four categories along those lines, like so:

In other words, Maintenance was Proactive, but had Delayed results (you need to monitor your system for a while before you grasp the full impact of changes). Research was more Reactive, because we tend to research issues that are spawned by some stimulus (“what’s the best way to implement Analysis Services in a clustered environment?” came up as a Research topic because we have a pending BI project).

Immediate results came from Architectural changes; adding more spindles to our SAN changed our performance quickly, but there was Proactive planning involved before we made the change. Support is Reactive, but has Immediate results; the expectation is that support issues get prioritized, so we try to resolve those quickly as part of our Operational Level Agreements with other departments.
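One way to make the quadrant concrete is a small lookup table. The Python below is only a sketch: the names (`MARS`, `Quadrant`, `proactive_share`) are invented for illustration, the ticket counts in the usage line are made up, and putting Research in the Delayed column is inferred from the layout of the four categories rather than stated outright.

```python
from enum import Enum
from typing import NamedTuple


class Action(Enum):
    PROACTIVE = "Proactive"
    REACTIVE = "Reactive"


class Result(Enum):
    DELAYED = "Delayed"
    IMMEDIATE = "Immediate"


class Quadrant(NamedTuple):
    action: Action
    result: Result


# Each MARS category occupies exactly one cell of the 2x2 grid.
MARS = {
    "Maintenance": Quadrant(Action.PROACTIVE, Result.DELAYED),
    "Architecture": Quadrant(Action.PROACTIVE, Result.IMMEDIATE),
    # Assumption: Research's "Delayed" result is inferred, not stated.
    "Research": Quadrant(Action.REACTIVE, Result.DELAYED),
    "Support": Quadrant(Action.REACTIVE, Result.IMMEDIATE),
}


def proactive_share(counts):
    """Return the fraction of tickets that fall in Proactive categories.

    `counts` maps a MARS category name to a number of tickets.
    """
    total = sum(counts.values())
    if total == 0:
        return 0.0
    proactive = sum(
        n for category, n in counts.items()
        if MARS[category].action is Action.PROACTIVE
    )
    return proactive / total


# Hypothetical ticket counts, purely for illustration.
example_counts = {"Maintenance": 2, "Architecture": 1, "Research": 1, "Support": 4}
share = proactive_share(example_counts)
```

Tracking a number like `share` over time is one rough way to see whether the team is moving toward the proactive side of the grid.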

After a couple of months looking at our workload (using Kanban), I see that we still spend a lot of time in Support, but that effort is trending downward; I continue to push Maintenance, Architecture, and Research over Support, and we’re becoming much more proactive in our approaches. I’m not sure if this quadrant approach is the best way to represent workload, but it does give me a general rule of thumb to help guide our efforts.

Stuart, do you tag your tickets so you can see the time allocation, or is it less formal? I like the diagram and adjectives.

Yeah, we try to track cycle time by category, but it’s less accurate than I’d like. The problem is that we don’t track actual time spent on a ticket, so I can only really use open date to close date as my metric. Since tickets don’t have a comparable weight (a manual backup of a database appears to take the same amount of time as a one-off query), it’s tricky to do anything other than monitor trends. I can say that we have more cards open in support than maintenance, and that it takes us roughly 2 days to close a support ticket (as opposed to 4 days for a maintenance ticket), but I can’t definitively say one takes more time than the other.
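For anyone who wants to try the same open-to-close measurement, here is a minimal Python sketch. It assumes each ticket can be exported as a (category, opened, closed) tuple; the function name `mean_cycle_days` and the sample tickets are hypothetical, chosen only to mirror the rough 2-day and 4-day figures above. As noted, this measures elapsed calendar days, not effort.

```python
from collections import defaultdict
from datetime import date
from statistics import mean


def mean_cycle_days(tickets):
    """Average open-to-close time in days, grouped by category.

    `tickets` is an iterable of (category, opened, closed) tuples.
    Tickets that are still open (closed is None) are skipped.
    """
    durations = defaultdict(list)
    for category, opened, closed in tickets:
        if closed is None:
            continue
        durations[category].append((closed - opened).days)
    return {category: mean(days) for category, days in durations.items()}


# Made-up sample data, for illustration only.
sample_tickets = [
    ("Support", date(2024, 1, 1), date(2024, 1, 3)),
    ("Support", date(2024, 1, 2), date(2024, 1, 4)),
    ("Maintenance", date(2024, 1, 1), date(2024, 1, 5)),
    ("Maintenance", date(2024, 1, 3), None),  # still open, ignored
]
cycle = mean_cycle_days(sample_tickets)
```

Because every ticket counts equally regardless of how much work it took, a result like this is only good for watching trends, which is the limitation described above.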